Some of the database components can’t be installed unless you install a pre-requisite components first prior to installing it.

Here I am listing all of them for guidance:

Some of the database components can’t be installed unless you install a pre-requisite components first prior to installing it.

Here I am listing all of them for guidance:

One of the new exciting features of Oracle 12c is the ability to restore a Table using RMAN which was not possible in previous Oracle releases.

To implement this there are pre-requisites:

Let us proceed in testing:

I will create a new dummy table based on actual table existing in the database:

SQL> create table TESTSCHEMA.DUMMY_TABLE as select * from TESTSCHEMA.EMPLOYEE_INFO;

Now check the current SCN number and use it for the command later on:

SQL> select dbms_flashback.get_system_change_number from dual;

OR use this query:

SQL> select current_scn from v$database;

OR check the SCN generated based on the tablespace backup:

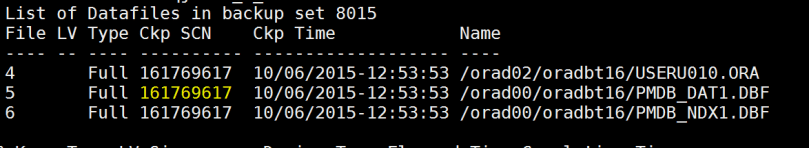

RMAN> list backup;

OR

Use the following command to check the backup of the data file associated with the tablespace:

This query will report the FILE_ID number that can be used with the below RMAN command to get the SCN for the tablespace:

select TABLESPACE_NAME,FILE_ID from dba_data_files;

then,

rman target /

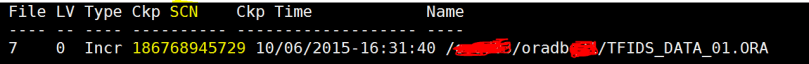

RMAN> LIST BACKUP OF DATAFILE 7;

***Before Dropping the table make sure you have a valid successful backup:

RMAN> backup database;

Then drop the newly created table:

SQL>Drop table TESTSCHEMA.DUMMY_TABLE;

Command to recover the table:

recover table ‘TESTSCHEMA’.’DUMMY_TABLE’ until scn 161451617 auxiliary destination ‘/oracle/app/’;

You need to wait as it will take time with many messages that is going to be generated….

Important Remark: you will notice messages about AUX Database being shutdown and startup, DON’T PANIC (this is not the database you are working on it, from behind the scene an auxiliary database is being created while table restoration is taking place).

You can safely remove the files generated under the “auxiliary destination” directory.

****The following are another alternatives for the same action:

A. You can also recover the table to be exported as a dump file (where you can import the table later on ) :

recover table ‘ TESTSCHEMA ‘. ‘DUMMY_TABLE’ until scn 161773617 auxiliary destination ‘/oracle/app/’ DATAPUMP DESTINATION ‘/oracle/export/’ DUMP FILE ‘table_recovery_dump.dmp’ notableimport;

B. You can recover the table to be renamed to a different name while recovered:

recover table ‘TESTSCHEMA’.’DUMMY_TABLE’ until scn 161773617 auxiliary destination ‘/oracle/app/’ remap table TESTSCHEMA.DUMMY_TABLE:DUMMY_TABLE_TEMP;

Here I am exploring two new Oracle datapump parameters that have been introduced in Oracle 12cR1 (12.1.0.2). They are: views_as_tables & logtime.

views_as_tables : will allow you to export the specified schema views as tables.

Logtime: will provide timestamp for each operation being performed while pump operations is running (in my opinion: very useful).

Export datapump command using both parameters example:

nohup $ORACLE_HOME/bin/expdp “‘/as sysdba ‘” DIRECTORY=PUMP_DIR DUMPFILE=test_view_as_table.dmp views_as_tables=SM_DEV.ANAL_COMP_VIEW logfile=export_table_as_view.log logtime=all &

***** If you face the below error while performing expdp with “logtime” parameter:

UDE-31623: operation generated ORACLE error 31623

ORA-31623: a job is not attached to this session via the specified handle

ORA-06512: at “SYS.DBMS_DATAPUMP”, line 3905

ORA-06512: at “SYS.DBMS_DATAPUMP”, line 5203

ORA-06512: at line 1

***** To resolve this problem I have checked the following:

1.I have checked that the streams parameter and it was beyond 40M which is OK.

select * from dba_registry;

3. The final resolution was to unset NLS_LANG environment variable.

Which solved my error problems!!!

To return back to our initial thread, When you check the content of the log file the time stamps are included which is useful to check the process that is consuming more time.

here is an example of export logfile with time stamp dates added:

In Oracle Database releases 11.2.0.4 and 12c this error (ORA-28405) will be generated, the reason behind that is that Oracle imposed extra restrictions when you grant secure OR password protected role to a normal role OR (password protected role).

****** To simulate this:

connect / as sysdba

SQL>create role RL1 identified by hello23;

SQL>grant create session to RL1;

SQL>create role RL2 identified by bye246;

SQL>grant create session to RL2;

SQL>grant RL1 to RL2;

ORA-28405 Cannot Grant Secure Role To Another Secure Role

There is a workaround solution which is to set parameter _grant_secure_role = true

SQL> alter system set “_grant_secure_role”=true scope=spfile;

I received the below errors while trying to recovery one of my standby databases that i am supporting:

SQL> RECOVER AUTOMATIC STANDBY DATABASE;

ORA-00283: recovery session canceled due to errors

ORA-00333: redo log read error block 104448 count 2048

SQL> recover managed standby database disconnect from session;

Media recovery complete.

SQL> shutdown immediate;

SQL> startup mount;

SQL> ALTER DATABASE MOUNT STANDBY DATABASE;

ORA-01153: an incompatible media recovery is active

*** solution:

SQL>STARTUP NOMOUNT

SQL>ALTER DATABASE MOUNT STANDBY DATABASE;

SQL> SELECT open_mode,database_role FROM v$database;

OPEN_MODE DATABASE_ROLE

——————– —————-

MOUNTED PHYSICAL STANDBY

SQL> RECOVER AUTOMATIC STANDBY DATABASE;

Sometimes you need to roll back the applied Oracle database PSU (Patch Set Update) for whatever the reason is. So you need to know how to de-install the patch applied in quick and swift way especially if you are doing it in production environment.

On the other hand, a DBA shouldn’t resort to this unless there is a big impact on production applications since in a controlled IT change environments patches are usually applied first in test, QA, and then production and tested during these stages.

Also, another important information to mention is that Oracle is now releasing 2 patches ( 1 database, and 1 for JAVA component) every quarter. And the Java Component patch sometimes could lead to application problems with specific vendors so you need to be careful.

The following is general overview procedure in how to roll back a database patch (either for database or Java component), in my example I am referring to JULY 2015 Oracle release quarterly security patch. It’s very important that you read the (readme.html) accompanied with the patches downloaded.

1.de-installing the database patch:

lsnrctl stop LISTENER_TESTDB

sqlplus ‘/as sysdba’

SQL> shutdown immediate

**** Then run the Opatch utility to roll back the applied patch:

cd /app/oracle/downloaded_patch/july2015/20831110

opatch rollback -id 20831110

You should receive message similar “OPatch completed successfully”

If you have warning then you can check the logs in the directory specified in the output.

sqlplus ‘/as sysdba’

startup

cd $ORACLE_HOME/OPatch

./datapatch –verbose

*** To verify that the patch is rolled back successfully:

select * from dba_registry_sqlpatch where PATCH_ID=20831110;

Important Remark:

My recommendation is to use utilrip after that to re-compile all database objects:

@$ORACLE_HOME/rdbms/admin/utlrp.sql

Also checking that all database components are vaild post de-instillation:

Select * from dba_registry;

lsnrctl start LISTENER_TESTDB

********************************************************************

2.de-installing the Java patch:

lsnrctl stop LISTENER_TESTDB

SQL> shutdown immediate

*** Then run the Opatch utility to roll back the applied patch:

cd /app/oracle/downloaded_patch/july2015/JAVA/21068507

opatch rollback -id 21068507

sqlplus ‘/as sysdba’

SQL> startup upgrade ;

SQL>exit

cd $ORACLE_HOME/OPatch

./datapatch –verbose

You will receive a message similar to this:

Patch 21068507 rollback: SUCCESS

SQL> shutdown immediate;

SQL> startup

Don’t forget to startup the listener:

lsnrctl start LISTENER_TESTDB

*** To verify that the patch is rolled back successfully:

select * from dba_registry_sqlpatch where PATCH_ID=21068507;

Important Remark:

My recommendation is to use utilrip after that re-compile all database objects:

@$ORACLE_HOME/rdbms/admin/utlrp.sql

Also checking that all database components are valid post de-instillation:

Select * from dba_registry;

I hope this would help…..

A new “compression algorithm” parameter has been introduced in 12c release for export datapump utility, the parameter is: COMPRESSION_ALGORITHM .

There are three modes for this new compression: basic, low, medium, high

The default mode is “basic”.

** Here i am going to explore exporting the same schema in 3 different ways for testing purpose from a 12c database:

Exporting the schema using datapump command with “no compression”:

$ORACLE_HOME/bin/expdp “‘/as sysdba ‘” DIRECTORY=DUMP_DIR SCHEMAS=TEST_SCHEMA DUMPFILE=DUMP_NO_COMPRESSION.dmp logfile=DUMP_NO_COMPRESSION_EXP.log logtime=all

Exporting the schema using datapump command using the new compression parameter in “HIGHT” mode:

$ORACLE_HOME/bin/expdp “‘/as sysdba ‘” DIRECTORY= DUMP_DIR SCHEMAS= TEST_SCHEMA DUMPFILE=DUMP_HIGHT_COMPRESSION.dmp COMPRESSION=ALL COMPRESSION_ALGORITHM=HIGH logfile=DUMP_HIGH_COMPRESSION_EXP.log logtime=all

Exportng the schema using datapump command using the new compression parameter in “MEDUIM” mode:

$ORACLE_HOME/bin/expdp “‘/as sysdba ‘” DIRECTORY= DUMP_DIR SCHEMAS= TEST_SCHEMA DUMPFILE=DUMP_MEDIUM_COMPRESSION.dmp COMPRESSION=ALL COMPRESSION_ALGORITHM=MEDIUM logfile=DUMP_MEDIUM_COMPRESSION_EXP.log logtime=all

The conclusion is the following:

Important remark: the normal export took around 7 minutes to finish however the highly compressed export process took around 1 hour !!!

While using medium compression algorithm it took 16 minutes.

This was performed on IBM AIX environment for Oracle 12.1.0.2, of course the “server resources” is a factor while performing compression in addition to the “data types” of the schema exported is another factor to be considered.

—————-

One of the fundamental questions for the Oracle DBA who is exploring Oracle 12c new multi-tenant architecture is how to create a pluggable database.

here i am exploring 2 methods……Let us start.

Method 1: using SQL Developer

After connecting to the database, you need to add the “DBA” option.

In sql developer go to “view”——–> then choose “DBA.

Then add the already defined database connection:

And choose “OK”

Then, right click on “Container Database” and choose “Create_Pluggable Database” option

The associated SQL definition output:

CREATE PLUGGABLE DATABASE DUMMY_PDB ADMIN USER DUMMY_ADMIN IDENTIFIED BY dummy55

FILE_NAME_CONVERT=(

‘/oracle/DCE/DCE/pdbseed/’, ‘/oracle/DCE/DCE/dummypdb/’

)

STORAGE (

MAXSIZE 50M

MAX_SHARED_TEMP_SIZE UNLIMITED

)

When I hit “Apply”, I received the error ORA-65113: value of MAX_PDB_STORAGE property for the PDB is too low

When I increased the value to 900 MB, the execution was successful

You will find that the new pluggable database has been created successfully:

Method 2: using Traditional SQL Statement

—Creating Pluggable database from “seed”

SQL> CREATE PLUGGABLE DATABASE sales_pdb

ADMIN USER dbapower IDENTIFIED BY fofo431

ROLES = (dba)

DEFAULT TABLESPACE TS_SALES_DATA_01

DATAFILE ‘/oracle/DB45/sapdata1/sales01.dbf’ SIZE 100M AUTOEXTEND OFF

FILE_NAME_CONVERT = (‘/oracle/DB45//DB45/pdbseed/’,

‘/oracle/DB45//DB45/salespdb/’)

STORAGE (MAXSIZE 1G)

PATH_PREFIX = ‘/oracle/DB45/DB45//salespdb/’;

I have received the below error when I tried using sqlplus on IBM AIX server with Oracle 12c installed on it and upgraded:

sqlplus ‘/as sysdba’

exec(): 0509-036 Cannot load program sqlplus because of the following errors:

rtld: 0712-001 Symbol CreateIoCompletionPort was referenced

from module /oracle/oradb/product/12.1.0/lib/libttsh12.so(), but a runtime definition

of the symbol was not found.

rtld: 0712-001 Symbol GetMultipleCompletionStatus was referenced

from module /oraclex/oradbp07/product/12.1.0/lib/libttsh12.so(), but a runtime definition

of the symbol was not found.

rtld: 0712-002 fatal error: exiting.

Solution

Change the IOCP IBM AIX parameter from “defined” to “available”, this action requires root privilege

# smitty iocp

Select Change / Show Characteristics of I/O Completion Ports.

Change configured state at system restart from Defined to Available

Remark: it is a prerequisite to change IOCP parameter before upgrading the database to 12c in IBM AIX environment.

I hope this helps………

I have been awarded the Oracle ACE Associate Award, this award is given to elite technical professionals in Oracle technology, for their expertise in addition to their contribution within Oracle community.

I am very happy to say that i am the First Saudi National IT Professional to receive such distinction 🙂 , and among the best of Oracle ACE’s from around the world.

My Oracle ACE Profile:

https://apex.oracle.com/pls/apex/f?p=19297:4:::NO:4:P4_ID:13500

Oracle ACE News Letter for the Month of September 2015:

الحمد لله رب العالمين