There are many ways to migrate your Oracle database, here I am exploring transportable export/import method that is fast and efficient.

I will be migrating a pluggable database from windows OS to Linux which both of them has “little” endian….so there will be no conversion process taking place:

SELECT d.PLATFORM_NAME, ENDIAN_FORMAT

FROM V$TRANSPORTABLE_PLATFORM tp, V$DATABASE d

WHERE tp.PLATFORM_ID = d.PLATFORM_ID;

Verify that User/Application tablespaces are self contained:

The following check will ensure from physical and logical side that there are no dependency user objects stored under SYSTEM,SYSAUX tablespaces.

SQL> alter session set container=PDB_TEST;

SQL> EXECUTE DBMS_TTS.TRANSPORT_SET_CHECK(‘FAST_START_TBS,TS_NE,TS_TESTING,TABLESPACE_DUMMY,TS_SCHEMA,TS_SPECIAL_SCHEMA’, TRUE);

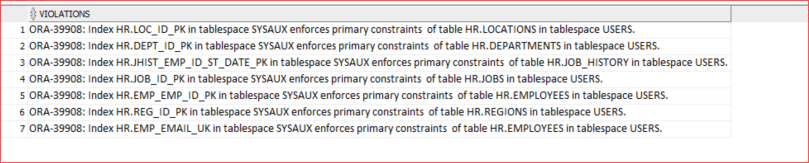

SQL> SELECT * FROM TRANSPORT_SET_VIOLATIONS;

As shown above the query is showing violations …..for example: index REG_ID_PK under HR schema is stored in SYSAUX tablespace, what I am going to do is to migrate this index to USERS tablespace.

SQL> ALTER INDEX HR.REG_ID_PK REBUILD TABLESPACE USERS;

To repeat the same process to other indexes…you can use the following wrapping SQL:

SQL> select ‘alter index ‘||OWNER||’.’||INDEX_NAME||’ REBUILD TABLESPACE USERS;’ from dba_indexes where OWNER=’HR’ and tablespace_name=’SYSAUX’;

After successfully finishing the cleanup proceed to the next step.

Create a directory in the source database:

SQL> CREATE OR REPLACE DIRECTORY export_dir AS ‘C:\18c_vm\export_dir’ ;

SQL> grant read,write on directory export_dir to system;

Set the source tablespaces in “read-only” mode, this can be done by executing the following wrap statement:

SQL> select ‘alter tablespace ‘ || tablespace_name || ‘ read only;’ from dba_tablespaces where tablespace_name not in (‘SYS’,’SYSTEM’,’UNDOTBS1′,’TEMP’,’SYSAUX’);

expdp system/XXXX@//localhost:1521/pdb_origin full=y transportable=always directory=export_dir dumpfile=pdb_tts.dmp metrics=y exclude=statistics compression=all logfile=pdb_tts_export.log

After that, copy the dump file and the data file to the destination database server.

All of the following steps will be performed in the destination (Linux) server:

create a directory for the destination pluggable database:

mkdir /opt/oracle/oradata/ORCLCDB/PDB_TEST

add the following PDB service in the tnsnames.ora file:

PDB_TEST =

(DESCRIPTION =

(ADDRESS = (PROTOCOL = TCP)(HOST = 0.0.0.0)(PORT = 1521))

(CONNECT_DATA =

(SERVER = DEDICATED)

(SERVICE_NAME = PDB_TEST)

)

)

create the pluggable database in the destination database server:

CREATE PLUGGABLE DATABASE “PDB_TEST” ADMIN USER “admin” IDENTIFIED BY “XXXX”

FILE_NAME_CONVERT=(

‘/opt/oracle/oradata/ORCLCDB/pdbseed/system01.dbf’, ‘/opt/oracle/oradata/ORCLCDB/PDB_TEST/SYSTEM01.DBF’,

‘/opt/oracle/oradata/ORCLCDB/pdbseed/sysaux01.dbf’, ‘/opt/oracle/oradata/ORCLCDB/PDB_TEST/SYSAUX01.DBF’,

‘/opt/oracle/oradata/ORCLCDB/pdbseed/undotbs01.dbf’, ‘/opt/oracle/oradata/ORCLCDB/PDB_TEST/UNDOTBS01.DBF’,

‘/opt/oracle/oradata/ORCLCDB/pdbseed/temp012019-02-04_19-34-58-889-PM.dbf’, ‘/opt/oracle/oradata/ORCLCDB/PDB_TEST/TEMPD.DBF’

)

STORAGE UNLIMITED TEMPFILE REUSE;

within the pluggable database PDB_TEST define the dump directories as follows:

sqlplus / as sysdba

SQL> alter session set container=PDB_TEST;

SQL> alter database open;

SQL> CREATE DIRECTORY dump_dir AS ‘/opt/oracle/oradata/ORCLCDB/PDB_TEST/DUMP’;

SQL> GRANT read, write on directory dump_dir to system;

I will now perform the import operation using transportable tablesapce:

impdp system/XXXX@pdb_test directory=dump_dir dumpfile=PDB_TTS.DMP logfile=full_tts_imp.log metrics=y transport_datafiles=’/opt/oracle/oradata/ORCLCDB/PDB_TEST/DUMP/USERSD01.DBF’

Now, I will perform post-migration verification:

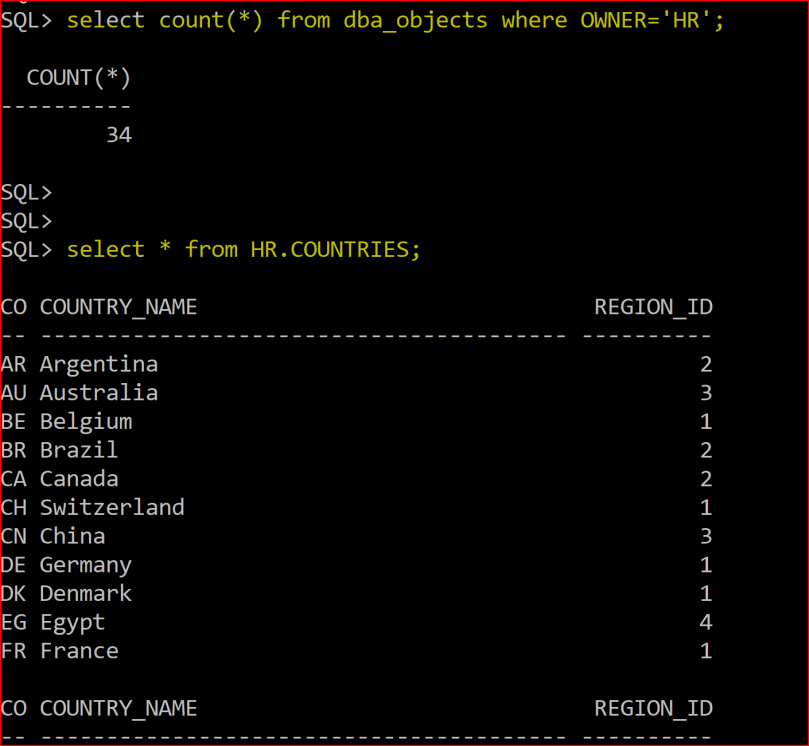

As shown above the tablespace USERS is created

As shown above HR schema was created, and I was able to perform a select statement to view the table HR.COUNTRIES !

Since this is a demo, I will put the tablesapce in the “SOURCE” windows database environment back to read,write mode:

SQL> alter tablespace USERS read write;

Final Notes:

Putting source database user tablespaces to “read-only” means outage…as the system/application is expected to perform both read,write operations. If the data transported is huge then its important to reduce the cut-off time by taking RMAN incremental backups then switching the tablespaces to read-only for the last and final incremental backup as this will minimize down-time.

AWR database is not moved using transportable tablespaces, to move it go the source database instance and perform the following:

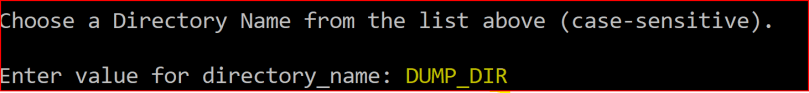

SQL> @?/rdbms/admin/awrextr.sql

Enter value for dbid: 2331138178

Enter value for num_days: 7

Enter value for begin_snap: 355

Enter value for end_snap: 382

Enter value for directory_name: DUMP_DIR

After that I will copy the dump file to the destination database server:

cp AWRDAT_355_382.DMP /opt/oracle/oradata/ORCLCDB/PDB_TEST/DUMP

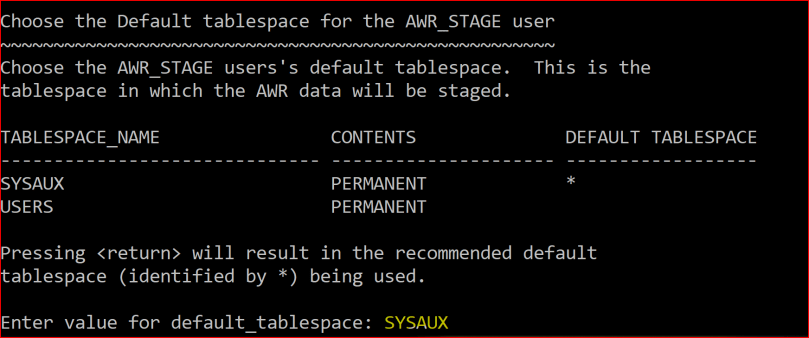

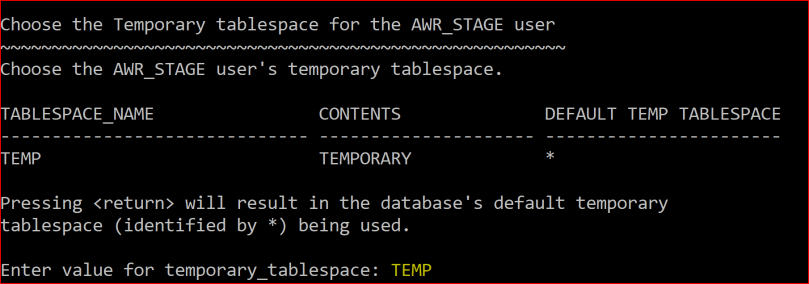

AWR data will be transfarred from the staging schema AWR_STAGE to the AWR tables in SYS schema.